Cognitive biases, flawed reasoning, fallacies and denial dominate opinions and decision making

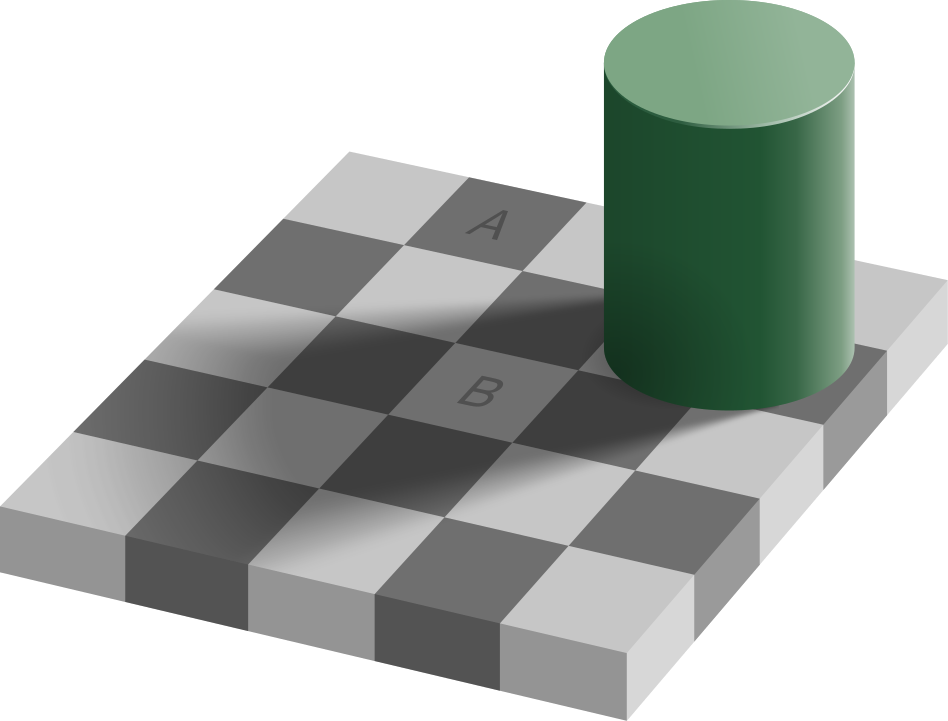

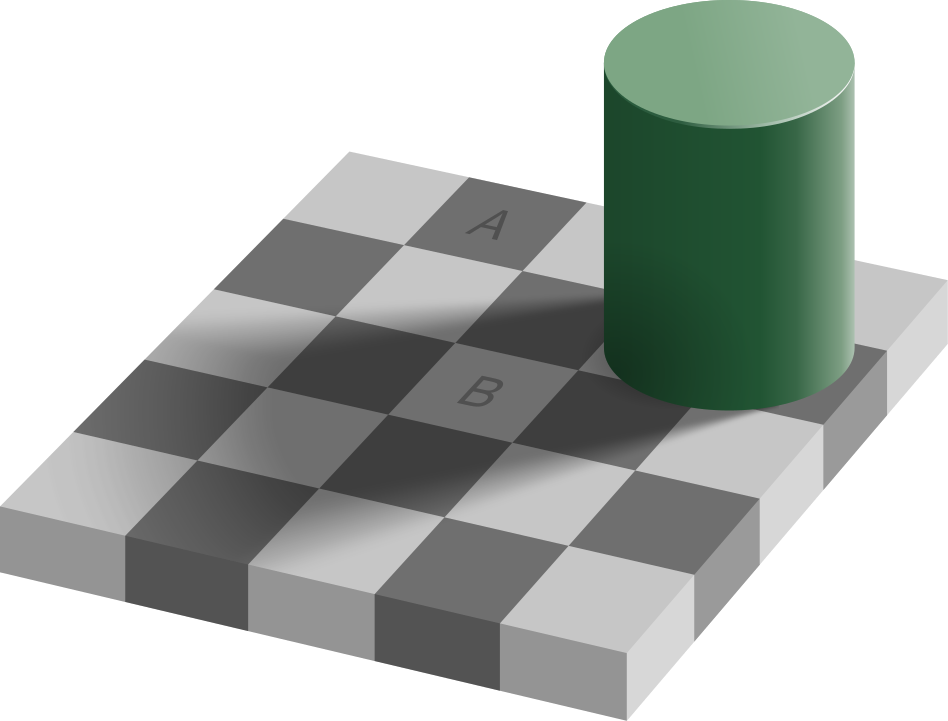

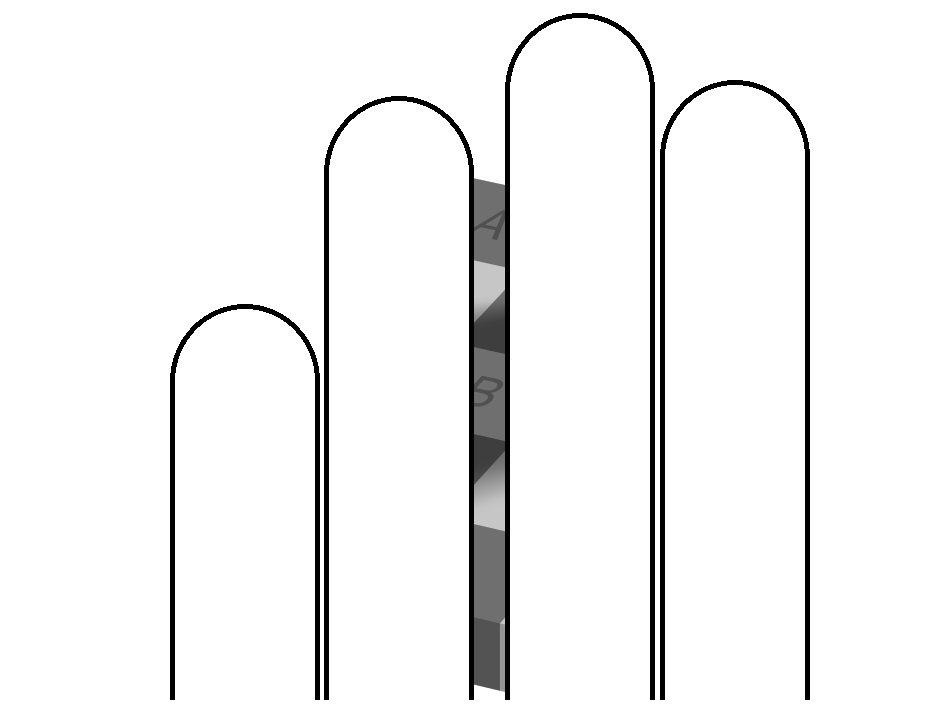

Things that appear to be obvious are not necessarily true. Despite appearances, the squares marked A and B in the optical illusion are actually the same shade of grey!


Attribution: Edward H. Adelson: Checker shadow illusion

People are generally unaware of such flaws in their own thinking and can be misled into having strong opinions on things they know little about.

Kinds of misthinking include:

- cognitive biases

- flaws in reasoning

- fallacies

- denial.

Flaws in understanding the world

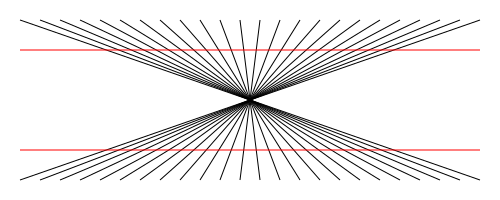

Although people have a strong impression that they see the world as it is, there are in reality many ways in which their brains' attempts to make sense of the world can end up misleading them. One example is the Hering illusion, in which two straight, parallel lines appear to bow outwards.

Attribution: Mabit1

A second example is that at one time it was thought that the Sun orbited the Earth. This view was so strongly held that Galileo was prosecuted by the Roman Catholic Church, threatened with torture and, at his trial, sentenced to house arrest for the rest of his life merely for discussing the alternative heliocentric view of Copernicus [3].

Cognitive biases

Some more subtle flaws in mental processing are the cognitive biases. Wishful thinking (optimism bias) will be familiar to most people, both in their own behaviour and in the behaviour of others.

Overconfidence is widely documented. A common example is that many surveys have found that most drivers think they are better-than-average drivers, which cannot be true if "average" is taken to mean the median driver. For example, in a 2018 survey, 73% of US drivers considered themselves to be better-than-average drivers [4]. Overconfidence is considered to be the most damaging bias by Daniel Kahneman, who was awarded a Nobel Prize for his work on cognitive biases [5]. Overconfidence is particularly dangerous if people are unaware of it and take no precautions to combat it.
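The arithmetic behind the driver example can be checked directly, assuming that "average" means the median. The sketch below uses made-up skill scores (the function name `share_above_median` and the score distribution are illustrative assumptions, not data from the survey). Whatever scores are used, no more than half of them can lie strictly above the median.

```python
import random
import statistics


def share_above_median(scores):
    """Return the fraction of scores that are strictly above the median."""
    median = statistics.median(scores)
    return sum(score > median for score in scores) / len(scores)


# Hypothetical driving-skill scores; the distribution is an assumption.
rng = random.Random(42)
skills = [rng.gauss(50, 10) for _ in range(10_001)]

if __name__ == "__main__":
    # Always at most 0.5, so 73% of drivers cannot all be above the median.
    print(f"Share above median: {share_above_median(skills):.3f}")
```

A figure of 73% therefore means that a substantial share of respondents must be overestimating their own ability relative to the median driver.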

The Dunning-Kruger effect was discovered by researchers Dunning and Kruger in their work on overconfidence. They found that while people are generally overconfident in their self-assessments, those who are least able are the most overconfident in their ability. Put simply, people don't know what they don't know, and the less they know, the less they are aware of their ignorance. Interestingly, those who are most able actually underestimate their own ability, and experts on a subject tend not to realise how much more they know than the general population, which can lead to communication difficulties.

Groupthink / herd mentality

Groupthink was described by Janis as "the mode of thinking that persons engage in when concurrence-seeking becomes so dominant in a cohesive ingroup that it tends to override realistic appraisal of alternative courses of action" [6].

There are many other cognitive biases, including confirmation bias. Wikipedia lists dozens [7].

Research flaws

There is a large literature on how to avoid research errors.

Sample size

One common misunderstanding is over the size of survey samples, with many people believing that if a sample size is large, then the results will be a reliable reflection of the whole population. This is true only if the sample is representative of the whole population, and is not true if the sample is biased.
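A small simulation can make this concrete. The sketch below is not based on any real survey: the population size, the 30% true rate of "yes" opinions, and the assumption that "yes" holders are three times as likely to respond are all invented for illustration. It compares a small random sample with a much larger self-selected one.

```python
import random


def simulate(seed=0):
    """Compare a small random sample with a large biased sample."""
    rng = random.Random(seed)

    # Hypothetical population of 200,000 people; about 30% say "yes" (assumed).
    population = [rng.random() < 0.30 for _ in range(200_000)]
    true_rate = sum(population) / len(population)

    # Small random sample: every person is equally likely to be chosen.
    small_random = rng.sample(population, 1_000)
    random_estimate = sum(small_random) / len(small_random)

    # Large biased sample: "yes" holders respond with probability 0.6,
    # everyone else with probability 0.2 (both values are assumptions).
    biased = [p for p in population if rng.random() < (0.6 if p else 0.2)]
    biased_estimate = sum(biased) / len(biased)

    return true_rate, random_estimate, len(biased), biased_estimate


if __name__ == "__main__":
    true_rate, random_estimate, biased_n, biased_estimate = simulate()
    print(f"True rate:                    {true_rate:.3f}")
    print(f"Random sample (n=1000):       {random_estimate:.3f}")
    print(f"Biased sample (n={biased_n}): {biased_estimate:.3f}")
```

Under these assumptions the biased sample contains tens of thousands of responses yet should land near 56% rather than 30%, while the random sample of 1,000 should land within a few percentage points of the true rate. Collecting more responses does not correct a biased selection process.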

Interpretation of associations

A second common misunderstanding is the fallacy that association implies causation. An example is that patients admitted to hospital at a weekend have a higher mortality than those admitted during a weekday. A simplistic conclusion is that hospital care is worse at weekends, but a proper analysis takes account of patients admitted at weekends being sicker than those admitted during weekdays [8]. It is crucial that policy decisions are not based on analyses that include basic errors such as these.
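The weekend example can also be sketched as a simulation. The numbers below are invented and are not taken from the study cited in [8]: the sketch assumes that weekend patients are more often severely ill, and that the risk of death depends only on severity, never on the day of admission. The function names `simulate_admissions` and `mortality` are illustrative.

```python
import random


def simulate_admissions(n=200_000, seed=1):
    """Generate hypothetical (weekend, severe, died) admission records."""
    rng = random.Random(seed)
    records = []
    for _ in range(n):
        weekend = rng.random() < 2 / 7
        # Assumption: weekend patients are more often severely ill.
        severe = rng.random() < (0.5 if weekend else 0.2)
        # Assumption: risk of death depends on severity only, not on the day.
        died = rng.random() < (0.10 if severe else 0.02)
        records.append((weekend, severe, died))
    return records


def mortality(records, weekend, severe=None):
    """Death rate for weekend or weekday admissions, optionally by severity."""
    group = [d for w, s, d in records
             if w == weekend and (severe is None or s == severe)]
    return sum(group) / len(group)


if __name__ == "__main__":
    records = simulate_admissions()
    print(f"Crude:  weekend {mortality(records, True):.3f}, "
          f"weekday {mortality(records, False):.3f}")
    for severe in (True, False):
        label = "severe" if severe else "mild  "
        print(f"{label}: weekend {mortality(records, True, severe):.3f}, "
              f"weekday {mortality(records, False, severe):.3f}")
```

By construction, crude weekend mortality comes out at about 6% against about 3.6% on weekdays, even though care is identical on every day in this model. Once patients are compared within the same severity group, the difference should largely disappear.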

Raising standards

There is an extensive literature on research errors and how to avoid them. The large number of potential pitfalls means that much care is needed in carrying out research and translating it into practical application. However, non-professionals such as journalists, politicians and social media commentators often carry out and publish simple but flawed analyses without being aware of the difficulties.

Flaws in reasoning

There are numerous fallacies in debating that can contribute to wrong decisions being made, e.g.

- the straw man fallacy

- an ad hominem attack.

Denial

Climate denial can be categorised [9][10] as

- literal denial: outright denial of facts

- interpretive denial: denial of personal and global outcome severity

- implicatory denial: acknowledgement of facts but denial of their implications, e.g. avoidance, denial of guilt, rationalization of own involvement.

Conclusions

The consequence of all the cognitive biases and other flaws in understanding and discussing the world is that it is easy to draw a wrong conclusion. Once groupthink is involved, it is easy for people to form groups that distrust other groups, and for people to become polarised. Add in unscrupulous politicians and a biased media, and fallacies can become established and entrenched.

Those with different views to a group can then be dismissed as

- ignorant and/or stupid

- corrupt

- doom-mongers

- troublemakers.

The end result is a failure to reach a consensus and wrong decisions. This is not a good way for society to approach difficult problems.

References

| [1] | Edward H. Adelson: Checker shadow illusion https://en.wikiversity.org/wiki/File:Checker_shadow_illusion.svg |

| [2] | Mabit1: Hering illusion https://commons.wikimedia.org/wiki/File:Hering_Taeuschung.svg, shared under the Attribution-Share Alike 4.0 International licence |

| [3] | Jessica Wolf (2016) The truth about Galileo and his conflict with the Catholic Church https://newsroom.ucla.edu/releases/the-truth-about-galileo-and-his-conflict-with-the-catholic-church, viewed 21.1.2022 |

| [4] | American Automobile Association survey (2018) https://newsroom.aaa.com/2018/01/americans-willing-ride-fully-self-driving-cars/, viewed 19.1.2022 |

| [5] | Daniel Kahneman: "What would I eliminate if I had a magic wand? Overconfidence" (2015) https://www.theguardian.com/books/2015/jul/18/daniel-kahneman-books-interview |

| [6] | Kopp (2016) Avoiding the Pitfalls of the Dunning-Kruger Effect and Groupthink https://users.monash.edu/~ckopp/archive/PAPERS/Seminar-DKE+Groupthink-2016.pdf |

| [7] | https://en.wikipedia.org/wiki/List_of_cognitive_biases |

| [8] | Emergency care in hospitals is as good at the weekend as on weekdays (2022) https://evidence.nihr.ac.uk/alert/hospital-emergency-care-is-as-good-at-the-weekend-as-on-weekdays/ |

| [9] | Stanley Cohen (2001) States of Denial: Knowing about Atrocities and Suffering ISBN: 978-0-745-62392-4 |

| [10] | Wullenkord MC (2022) From denial of facts to rationalization and avoidance: Ideology, needs, and gender predict the spectrum of climate denial https://www.sciencedirect.com/science/article/pii/S0191886922001209?via%3Dihub |

Appendix: Checker shadow illusion

First published: 16 Jan 2022
